In this newsletter issue, we present 🗞 impactful industry news, 💡 interesting AI use cases, 🔬 exciting research developments, and 💻 useful code & tools for practitioners and learners.

🗞 Industry News

Kargu drone by Turkish manufacturer STM. A similar model carried out the first reported(!) autonomous drone strike in March 2020. / Mythic's M1076 chip. / Mozilla studied YouTube recommendations.

💬 Autonomous Drone Warfare already started in 2020

In March 2020, a Kargu-2 drone by Turkish manufacturer STM (stm.com.tr) attacked a group of Haftar forces in Libya. While countries such as the US have been carrying out drone strikes for two decades, this reported strike is unique because the UAV attacked fully autonomously: on its own initiative, without a remote operator deciding whether or not to attack. Only this year did a Panel of Experts on Libya from the UN Security Council publish a report (undocs.org) on the conflict, bringing to light that the age of autonomous drone warfare had already begun. Since then, the Turkish manufacturer has added even more capabilities to its fleet, such as nightmarish swarm operation (forbes.com).

Read more on the incident on Gizmodo (gizmodo.com) and more on the legal and ethical implications of autonomous drone warfare in an Articles of War post from Hitoshi Nasu, Professor of international law at the University of Exeter (lieber.westpoint.edu).

🆕 Mythic introduces new analog AI chip that outperforms GPUs

US company Mythic (mythic-ai.com) launched the M1076, a new analog matrix processor for Edge AI applications. Analog circuits require lower electrical currents than their digital counterparts. In combination with dense flash memory, these new chips promise higher energy efficiency and lower cost, outshining graphics processing units (GPUs) that are used today. Mythic recently raised $75M in additional capital and now targets Edge AI deployments, where performance is critical (forbes.com).

👎 Mozilla urges YouTube to fix its recommendations

A recent crowdsourced study led by Mozilla surveyed how often people regret watching a video online. Videos served by YouTube's AI recommendations were the most often regretted. Fear-mongering, misleading or simply false content, inappropriate videos next to children's cartoons, and plain spam are often found in the video platform's recommendations. Non-English speakers report higher rates of regret. Mozilla urges YouTube and policymakers to take action now. (foundation.mozilla.org)

💡 AI Use Cases

👴 AI in Elder Care: Intelligent surveillance systems for patients with dementia

A new series, "Automating Care", details the advances of AI in caregiving. In its latest article, The Guardian interviews companies that provide intelligent surveillance solutions for the home and the seniors living in it. (Read the full article on theguardian.com)

"[An AI System connected to] sensors at the top of doors and in some rooms monitor movements and learn the pair’s daily activity patterns, sending warning alerts to Franklin’s phone if her dad’s normal behavior deviates – for instance if he goes outside and doesn’t return quickly."

Cherry Labs' Cherry Home sees a stumbling elderly patient in its surveillance system / Venturebeat

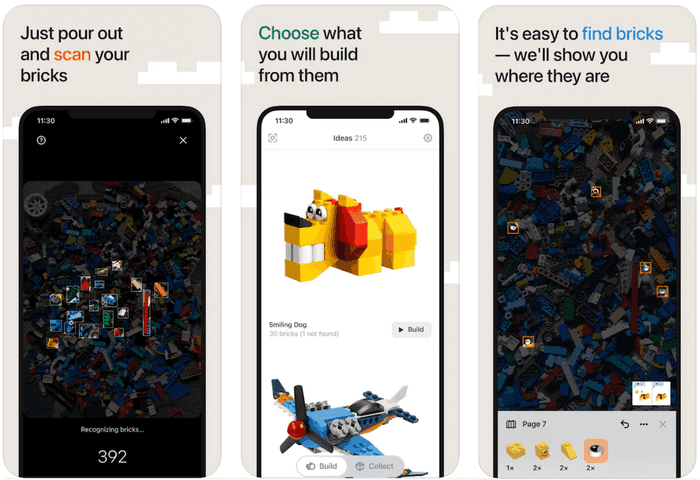
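The quoted idea of learning a daily pattern and alerting on deviations can be illustrated with a very small sketch. This is not how Cherry Home or any vendor mentioned in the article works; the function name `is_unusual`, the z-score threshold, and the sample durations are all assumptions made up for illustration.

```python
from statistics import mean, stdev


def is_unusual(history_minutes, observed_minutes, threshold=3.0):
    """Flag an observation that deviates strongly from past durations.

    history_minutes: past durations of an activity (hypothetical sensor data).
    observed_minutes: the duration observed right now.
    threshold: how many standard deviations count as "unusual" (an assumption).
    """
    if len(history_minutes) < 2:
        return False  # not enough data to have learned a pattern yet
    mu = mean(history_minutes)
    sigma = stdev(history_minutes)
    if sigma == 0:
        return observed_minutes != mu
    return abs(observed_minutes - mu) / sigma > threshold


# Hypothetical durations (in minutes) of previous trips outside.
past_outings = [10, 12, 9, 11, 13, 10, 12]

print(is_unusual(past_outings, 11))  # a typical outing
print(is_unusual(past_outings, 45))  # far longer than usual, would trigger an alert
```

A real system would presumably model many signals at once (rooms, times of day, door events); the single-variable z-score here only shows the principle of comparing new behavior against a learned baseline.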

🧱 AI in Nursery: Detect what can be built from a pile of Legos

With the new iOS app Brickit (brickit.app), building Lego structures becomes easier than ever. The app uses the phone's camera to identify and count Lego bricks in a pile, then works out which models can be built from them. It even highlights the location of individual pieces within the pile. Download the free app on any iOS device and try it yourself (apps.apple.com).

Brickit

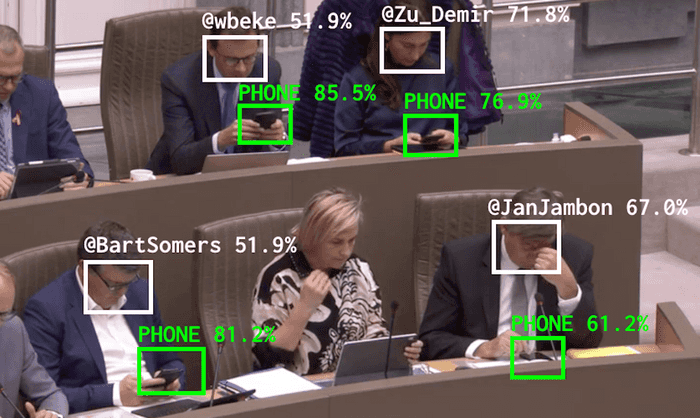

📵 AI in Parliament: Automatically tagging politicians in parliament when they are too distracted by their phones

Every meeting of the Flemish government in Belgium is live-streamed on the web. An AI released by Belgian tech artist Dries Depoorter scans the video stream for phones and tries to identify distracted politicians via object and face recognition. Short clips of each distracted politician are then posted to a Twitter and Instagram account with the politician tagged. "The Flemish Scrollers" was introduced on the artist's blog (driesdepoorter.be).

The Flemish Scrollers / Dries Depoorter

🧠 AI in Healthcare: Allowing patients with paralysis to speak

"Researchers at UC San Francisco have successfully developed a 'speech neuroprosthesis' that has enabled a man with severe paralysis to communicate in sentences, translating signals from his brain to the vocal tract directly into words that appear as text on a screen." (uscf.edu)

Neural networks decoded the man's brain signals into words, allowing him to communicate at 18 words per minute, compared to the 150-200 words per minute of normal speech. Previous work, such as Neuralink's implant (which we covered in an earlier newsletter), focused on restoring communication by typing out letters one by one. This recent work from UC San Francisco instead translates brain signals directly into words rather than individual letters.

UC San Francisco researchers translated brain signals into words using neural networks.

🔬 Research

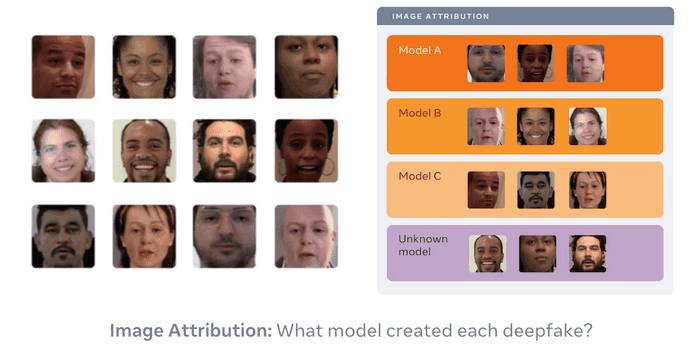

🥸 Reverse engineering generative models from a single deepfake image

The recent deepfake of Tom Cruise (youtube.com) reminded us that deepfake detection is becoming ever more critical. Researchers from Michigan State University and Facebook AI go one step further: they try to identify which generative model created a deepfake by deriving its hyperparameters from a single image. Read the full paper (arxiv.org) or the more accessible blog post (ai.facebook.com).

Reverse engineering generative models from a single deepfake image / FAIR

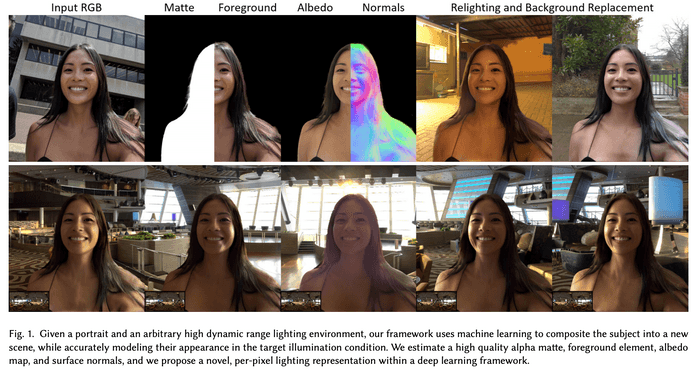

🤳 Total Relighting: Learning to relight portraits for background replacement

Google researchers developed an AI that matches the lighting in video calls to virtual backgrounds. While it does not yet work in real time, the results below are undoubtedly impressive. The paper is available on GitHub (github.io). In addition, I recommend watching YouTuber Two Minute Papers' video summary (youtube.com).

Pandey et al. / Google AI

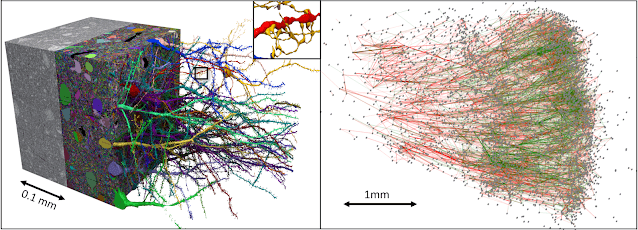

🧠 Explore brain tissue in super high resolution

Another research team has mapped a part of the human cortex at unprecedented resolution (biorxiv.org). The data covers a mere cubic millimeter of brain tissue, but its reconstruction amounts to a whopping 1.4 petabytes. As your computer is probably unable to store this, Google hosts an easily accessible brain map via their Neuroglancer browser interface (neuroglancer-demo).

One cubic millimeter of brain tissue digitized as 1.4 petabytes / Shapson-Coe et al. / Google AI Blog
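To get a feel for why 1.4 petabytes will not fit on a personal computer, here is a back-of-the-envelope sketch. It assumes decimal units (1 PB = 10^15 bytes) and hypothetical consumer drive sizes of 1 TB and 2 TB; only the 1.4 petabyte figure comes from the text above.

```python
TERABYTE = 10**12  # decimal terabyte, in bytes (assumed unit convention)

# 1.4 petabytes, written as 1400 terabytes to stay in integer arithmetic.
DATASET_BYTES = 1400 * TERABYTE


def drives_needed(dataset_bytes: int, drive_bytes: int) -> int:
    """Return how many drives of a given size are needed (rounded up)."""
    return -(-dataset_bytes // drive_bytes)  # ceiling division on integers


# Hypothetical drive sizes, chosen only for illustration.
for size_tb in (1, 2):
    count = drives_needed(DATASET_BYTES, size_tb * TERABYTE)
    print(f"{size_tb} TB drives needed: {count}")
```

Even with 2 TB drives, the dataset would fill several hundred of them, which is why browsing it through a hosted viewer is the practical option.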

💻 Code & Tools

🤖 GitHub and OpenAI launch Copilot, an AI pair programmer

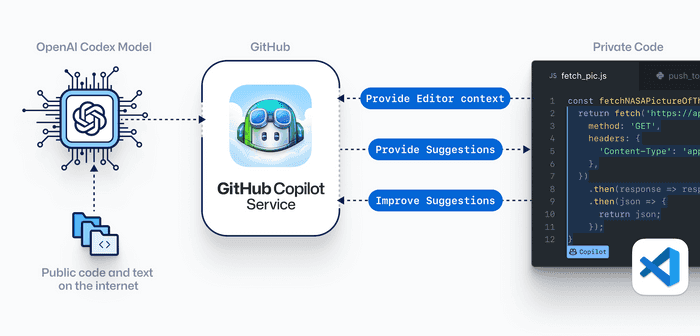

Since Microsoft bought a share in research lab OpenAI, their collaboration has been intensifying. For example, OpenAI has used billions of lines of public code hosted on Microsoft-owned GitHub to train a generative model nicknamed "Codex". Codex now powers GitHub Copilot, a coding assistant that can do much more than auto-complete. Sign up and download the technical preview now (copilot.github.com). Internally, we are still comparing Copilot with the already available AI coding assistant Kite (kite.com).

Copilot

📚 Facebook open-sources useful libraries

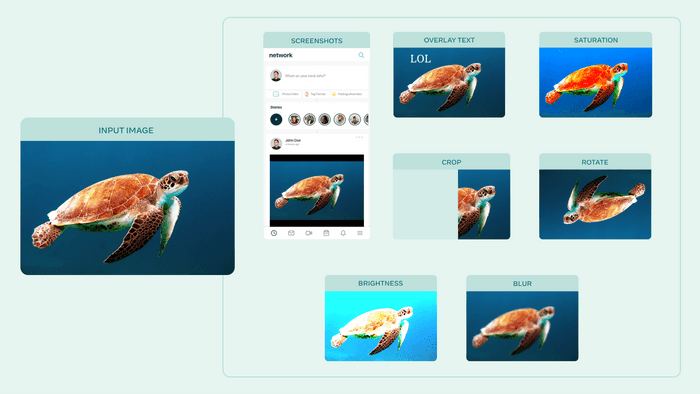

Facebook AI open-sourced both AugLy (github.com), a powerful data augmentation library, and Kats (github.com), a framework for time series analysis. The new augmentation tools can not only stretch and rotate images but also embed them in social media feeds or overlay them with meme-ish text, as seen below. AugLy is not limited to images: it also includes utilities for audio, video, and text.

AugLy: A new data augmentation library to help build more robust AI models / FAIR

📆 Events

📅 CVPR 2021 (past)

CVPR, the most important computer vision conference, was held virtually last month. At this year's Conference on Computer Vision and Pattern Recognition, 1660 papers were accepted compared to 1467 papers the previous year. The acceptance rate increased from 22.1% to 23.7%.
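The figures above also let us estimate how many papers were submitted each year. The sketch below simply divides accepted papers by the acceptance rate; because the percentages are rounded, the results are approximations implied by the numbers in this newsletter, not official submission counts. The function name `implied_submissions` is our own.

```python
def implied_submissions(accepted: int, acceptance_rate_percent: float) -> int:
    """Estimate submissions from accepted papers and a (rounded) acceptance rate."""
    return round(accepted / (acceptance_rate_percent / 100))


# Accepted papers and acceptance rates as stated in the text.
cvpr_2020 = implied_submissions(1467, 22.1)
cvpr_2021 = implied_submissions(1660, 23.7)

print(f"Implied submissions 2020: about {cvpr_2020}")
print(f"Implied submissions 2021: about {cvpr_2021}")
print(f"Growth in accepted papers: {(1660 - 1467) / 1467:.1%}")
```

In other words, both the number of submissions and the share of accepted papers grew, so the rise in accepted papers is not explained by a more lenient acceptance rate alone.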

A personal highlight was a workshop with Andrej Karpathy, Tesla's Chief of AI, which you can watch on YouTube. At the 4:50 mark, Karpathy compares Lidar with the vision-only approach that Tesla uses (youtube.com).

Andrej Karpathy at CVPR 2021

📅 ICML 2021 (July 18-24)

Another prominent Machine Learning conference starts next week: The 38th ICML, International Conference on Machine Learning (icml.cc). Learn more about other important AI conferences in our blog post (am.ai).

ICML